Augmented Reality (AR) App: Product Design

The challenge

How might a new AR app be made to feel familiar and intuitive?

About the Project

Discussions about AR tend to revolve around technology solutions. These solutions are quickly becoming more feasible with the introduction of ARKit (iOS) and ARCore (Android). However, the greatest challenge moving forward may be the user experience of such applications.

A myriad of challenges confront designers when creating AR apps, one of which is the construction of new mental models. We construct models based on our related, past experiences: the books we’ve read, the movies we’ve watched, the conversations we’ve had. We build mental models of software in much the same way. Google helps shape the mental model for search. Likewise, Amazon shapes it for e-commerce, eBay for auctions, Twitter for microblogging, and Microsoft Excel for spreadsheets. But for AR? The models are yet to be determined.

Leveraging Existing Mental Models

The revolution of digital photography was hastened by leveraging the mental models of analog photography: the emulation of a viewfinder, the display of a filmstrip, the flash of a bulb, and the sound of a shutter. To introduce users to AR, we may find that the closest existing mental model is digital photography. When taking a photo, we rely on a device to capture and display what we see. When using AR, we rely on a device to capture and alter what we experience.

"When taking a photo, we rely on a device to capture and display what we see. When using AR, we rely on a device to capture and alter what we experience."

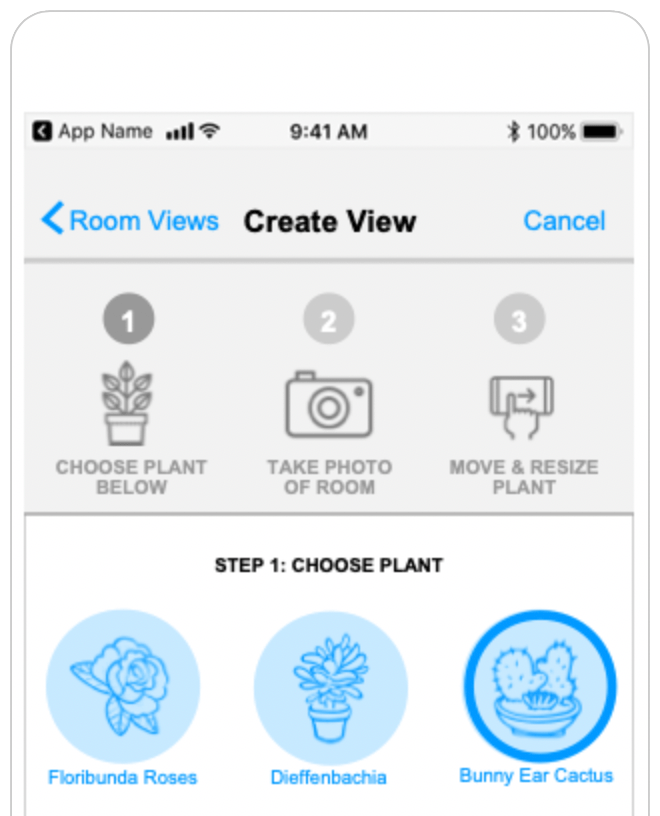

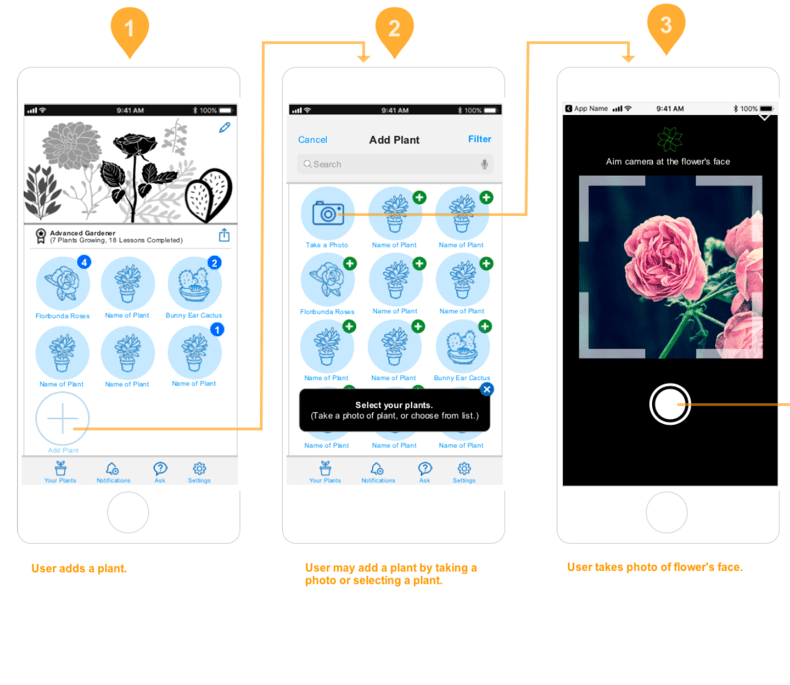

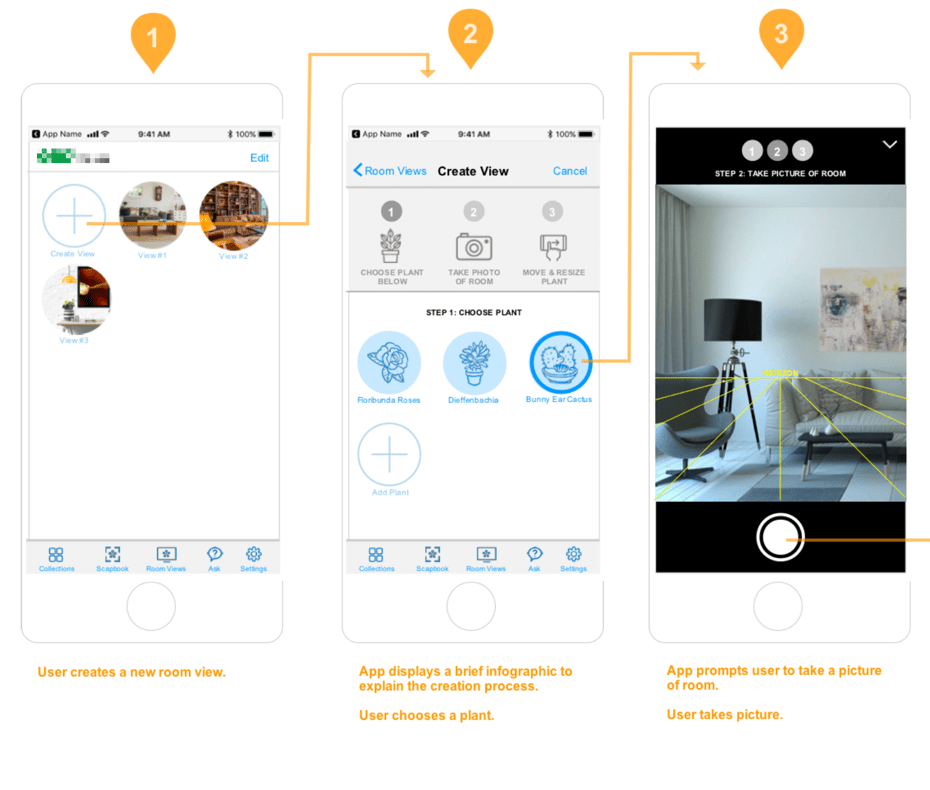

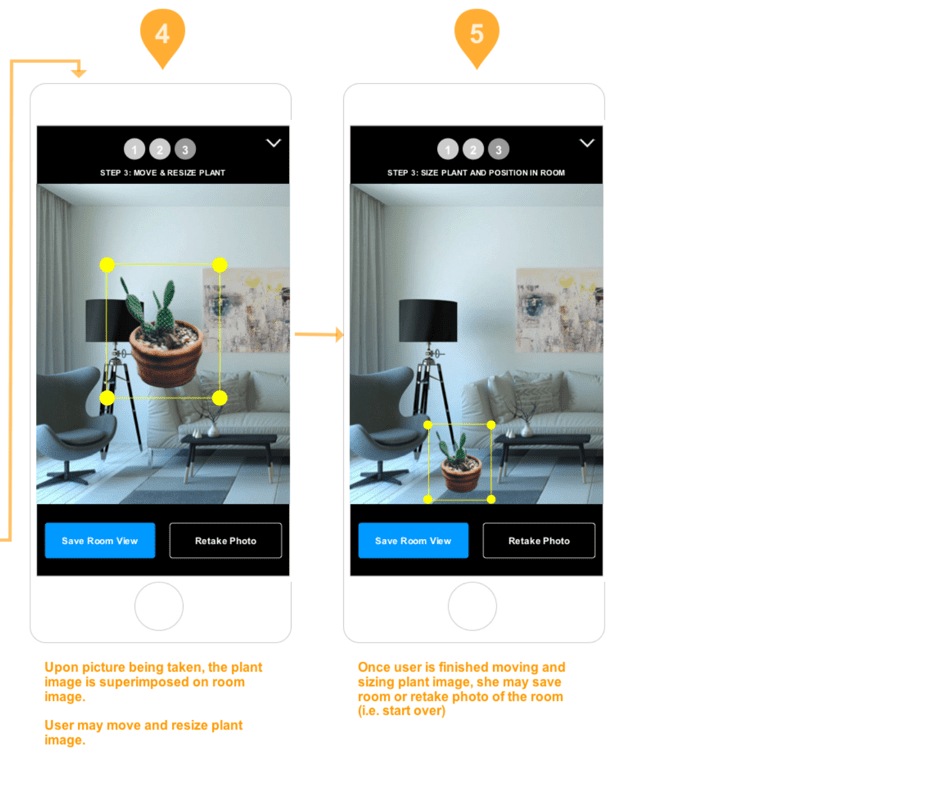

The following are wireframes for a home and garden app. (The client’s name is withheld.) You’ll notice the app borrows conventions from digital photography. The conventions establish a familiarity with the new app’s UX, including the ability to save a still image from within the AR visualization.

Cropped wireframe showing stepwise model/scene capture

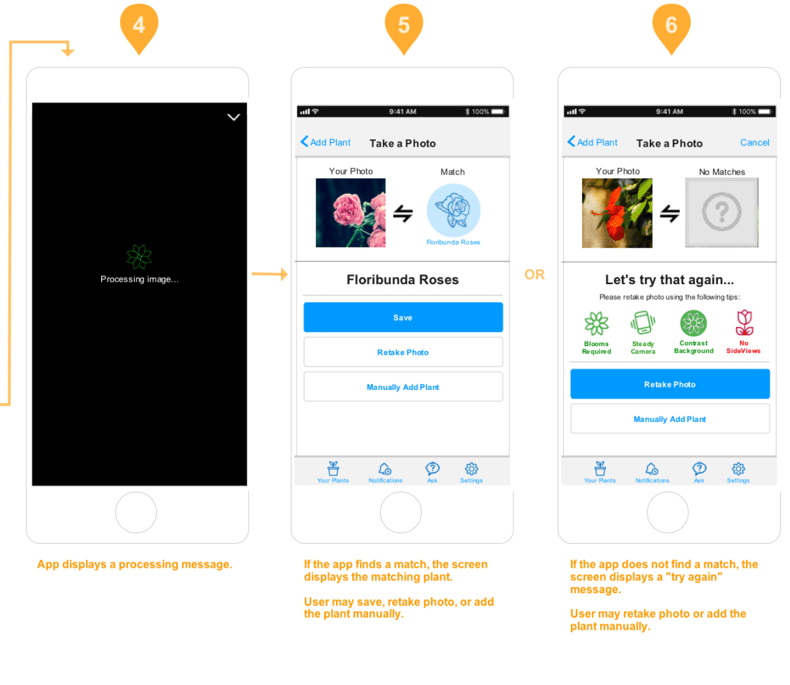

Image capture of a plant

The user first captures an image of a plant. When possible, the plant is identified and matched to a corresponding 3D model.
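The "when possible" in this step implies a fallback path for the design: the app needs something to show when a plant cannot be identified or has no model. The sketch below is one way that decision might be expressed. It is not the client's implementation. The catalog contents, the `Identification` type, the `match_model` name, and the 0.7 confidence threshold are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical catalog mapping a species label to a 3D model asset.
MODEL_CATALOG = {
    "monstera deliciosa": "models/monstera.usdz",
    "ficus lyrata": "models/fiddle_leaf_fig.usdz",
}


@dataclass
class Identification:
    label: str         # species name returned by some image classifier (assumed)
    confidence: float  # 0.0 to 1.0


def match_model(result: Optional[Identification],
                min_confidence: float = 0.7) -> Optional[str]:
    """Return a model asset path, or None when no confident match exists."""
    if result is None or result.confidence < min_confidence:
        return None  # nothing identified, or too uncertain to trust
    # Normalize the label so "Monstera deliciosa" and "monstera deliciosa" match.
    return MODEL_CATALOG.get(result.label.strip().lower())
```

A `None` result is the cue for the interface to offer an alternative, such as retaking the photo or browsing the catalog manually.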

( View series 1-3 in new tab ) ( View series 4-6 in new tab )

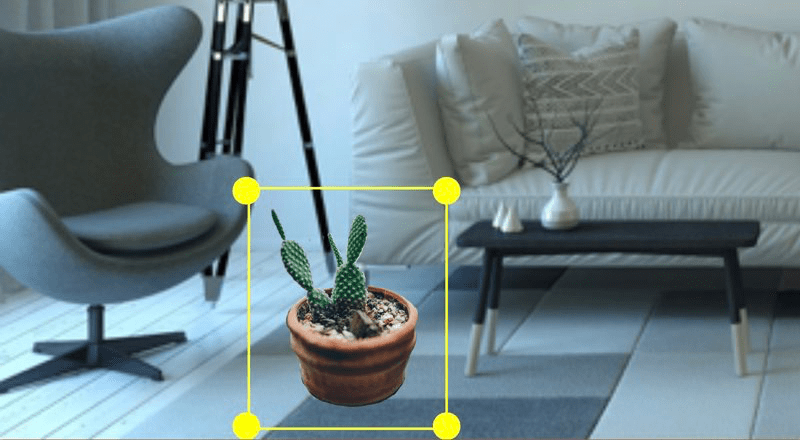

Superimposed 3D model

The user chooses a plant and captures a view of her room. The app superimposes the 3D model based on the device’s position.
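To make "based on the device’s position" concrete, here is a minimal sketch of one possible placement rule: put the model a fixed distance in front of the device and drop it to floor level. It assumes a right-handed, y-up coordinate system in which a yaw of 0 faces the negative z axis. The function name, the 1.5 m default distance, and the flat floor are illustrative assumptions, and a production app would more likely rely on the plane detection and hit testing provided by ARKit or ARCore.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def place_in_front(device_position: Vec3,
                   yaw_radians: float,
                   distance: float = 1.5,
                   floor_y: float = 0.0) -> Vec3:
    """Return a point `distance` metres ahead of the device, at floor height."""
    x, _, z = device_position
    # Horizontal forward direction for the assumed convention (yaw 0 faces -z).
    forward_x = -math.sin(yaw_radians)
    forward_z = -math.cos(yaw_radians)
    # Ignore the device's own height: the plant should rest on the floor.
    return (x + forward_x * distance, floor_y, z + forward_z * distance)
```

For example, a device held at `(0.0, 1.4, 0.0)` with a yaw of 0 and a distance of 2.0 yields a placement two metres ahead along negative z, at floor height.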

( View series 1-3 in new tab ) ( View series 4-5 in new tab )